Workspace Scaling

Resizing and Scaling Compute

Overview

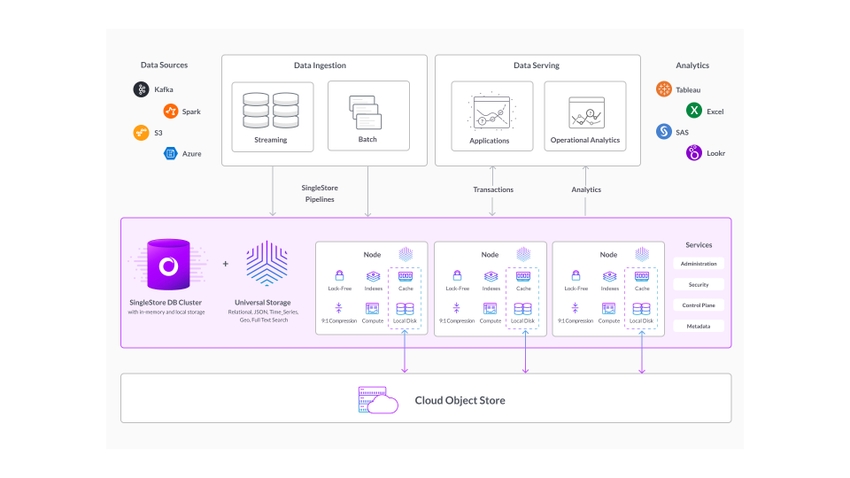

SingleStore Helios has a unique architecture which offers the flexibility to scale resources dynamically for both read and write workloads.

This is because SingleStore Helios is built on a clustered architecture which is distributed across compute resources.

Compute workspaces can be scaled up or down to accommodate changing workloads.

Scaling operations are always online. However, connections might drop for various reasons; when they do, an immediate reconnect should always succeed.
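Because a dropped connection is expected to recover on an immediate reconnect, client code can wrap database calls in a small retry helper. The following is a minimal sketch, not a driver feature: `connect` and `operation` are hypothetical callables supplied by the application, and it assumes the driver's connection errors surface as (or are mapped to) Python's built-in `ConnectionError`.

```python
import time


def run_with_reconnect(connect, operation, retries=3, delay_seconds=0.0):
    """Run operation(conn), reconnecting and retrying if the connection drops.

    connect   -- hypothetical zero-argument callable returning a new connection
    operation -- callable that takes the connection and returns a result
    """
    last_error = None
    for attempt in range(retries + 1):
        try:
            conn = connect()  # open a fresh connection on every attempt
            return operation(conn)
        except ConnectionError as exc:  # assumption: drops raise ConnectionError
            last_error = exc
            if attempt < retries:
                time.sleep(delay_seconds)  # 0.0 means reconnect immediately
    raise last_error
```

Only idempotent operations should be retried this way, since a statement may have been applied before the connection dropped.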

How Scaling Works

A SingleStore Helios compute workspace is made up of individual nodes, which allow an even distribution of jobs across the underlying cloud resources.

There are multiple ways to scale resources depending on the workload requirements: a workspace can be resized, scaled, or autoscaled.

Resizing

Resizing is performed by changing the base size of the compute deployment (for example, from S-12 to S-24).

As data is redistributed when resizing, the amount of time to perform a full resize is dependent on the workspace size and the size of the data working set.

Resizing is ideal for workloads which have grown or shrunk over time and are expected to continue operation at the new compute size.

Scaling

Scaling operations are performed by changing the scaleFactor of the deployment, for example, from "1" to "2" or "4".

This feature is designed to scale resources up and down in order to handle dynamic changes in workload needs.

Autoscaling

Autoscaling is designed to track the active compute workload and automatically scale the deployment based on compute and memory usage.

While many databases limit autoscaling to read-replicas, SingleStore has implemented autoscaling to provide both enhanced write and read performance.

When configuring autoscaling, users can turn the feature on or off and set the maximum amount of vCPU and memory to be provisioned (2x or 4x of the base amount).

Autoscaling is ideal for dynamic workloads where the user does not know when peaks in workload may occur. It can be turned on or off for each compute deployment independently.

The default settings for autoscaling may not fit every workload.

Autoscaling provides three sensitivity levels to handle a workload:

- Low: a more conservative level, which uses 15-minute sampling and a 30-minute cooldown.
- Normal: the standard configuration, which uses 5-minute sampling and a 10-minute cooldown.
- High: the most sensitive level, which uses 3-minute sampling and a 5-minute cooldown.

As a general rule, it is best to use the Normal setting.

The Low setting can be used when scaling should happen only after sufficient workload has been running for the 15-minute sampling period.

The High setting is recommended when workloads are most dynamic and scaling should occur as often as needed, with minimal cooldown before returning to the base scale factor.
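The three sensitivity levels can be summarized as a small lookup table. The sketch below is illustrative only: the sampling and cooldown values come from the list above, while the `Sensitivity` class and the `pick_sensitivity` selection rule are hypothetical helpers, not part of any SingleStore API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sensitivity:
    name: str
    sampling_minutes: int
    cooldown_minutes: int


# Values taken from the sensitivity levels listed above,
# ordered from least to most sensitive.
LEVELS = [
    Sensitivity("Low", 15, 30),
    Sensitivity("Normal", 5, 10),
    Sensitivity("High", 3, 5),
]


def pick_sensitivity(max_sampling_minutes):
    """Return the least sensitive level whose sampling window fits the budget.

    Hypothetical rule: choose the most conservative level that still
    samples at least as often as the workload requires.
    """
    for level in LEVELS:
        if level.sampling_minutes <= max_sampling_minutes:
            return level
    raise ValueError(
        "no level samples within %d minutes" % max_sampling_minutes
    )
```

For example, a workload that needs a scaling decision within 5 minutes of a sustained load change would map to the Normal level under this rule.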

Cache Configuration

Setting the Cache Configuration allows compute deployments to leverage greater volumes of Persistent Cache to increase the amount of data (the working set) that can be accessed with extremely low latency.

Increasing the cache configuration (for example, from “1x” to “2x” or “4x”) increases the overall volume of the cache and automatically distributes data within the cache.

This operation runs online, and data remains available for reads and writes throughout the reconfiguration process. Cache configurations can be increased or decreased as desired.

Resizing and Scaling

Scaling up or down can be triggered through the Cloud Portal or Management API.

Using Cloud Portal

To scale a workspace through the Cloud Portal, navigate to Deployments > Overview, select the workspace card, open the workspace options menu (⋮), and select Resize Workspace.

Using Management API

Resizing up or down through the Management API can be done by using WorkspaceUpdate size. Scaling can be done by using WorkspaceUpdate scaleFactor, and the Cache Configuration can be updated with WorkspaceUpdate cacheConfig.
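As a rough illustration, the request body for such an update can be assembled as follows. This is a sketch under stated assumptions rather than a definitive client: the field names `size`, `scaleFactor`, and `cacheConfig` come from the text above, but the base URL, the `PATCH /workspaces/{workspace_id}` path, bearer-token authentication, and the numeric value types are assumptions to verify against the Management API reference. The function only builds the request and does not send it.

```python
import json
import urllib.request

# Assumed base URL; confirm against the Management API reference.
API_BASE = "https://api.singlestore.com/v1"

ALLOWED_FACTORS = (1, 2, 4)  # values mentioned in the text; types are assumed


def build_workspace_update(workspace_id, api_key, size=None,
                           scale_factor=None, cache_config=None):
    """Build (but do not send) a PATCH request carrying WorkspaceUpdate fields."""
    body = {}
    if size is not None:
        body["size"] = size  # for example "S-24"
    if scale_factor is not None:
        if scale_factor not in ALLOWED_FACTORS:
            raise ValueError("scaleFactor must be 1, 2, or 4")
        body["scaleFactor"] = scale_factor
    if cache_config is not None:
        if cache_config not in ALLOWED_FACTORS:
            raise ValueError("cacheConfig must be 1, 2, or 4")
        body["cacheConfig"] = cache_config
    if not body:
        raise ValueError("nothing to update")
    return urllib.request.Request(
        f"{API_BASE}/workspaces/{workspace_id}",  # assumed endpoint path
        data=json.dumps(body).encode("utf-8"),
        method="PATCH",
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
    )
```

The returned object can be passed to `urllib.request.urlopen` to submit the update once the endpoint and credentials have been confirmed.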

High Availability

Critical workloads need to stay online, even when scaling the underlying resources.

Changing the Database Partition Count

You can use either of the following methods to change the partition count in a database:

- Use the BACKUP WITH SPLIT PARTITIONS command:

  BACKUP [DATABASE] db_name WITH SPLIT PARTITIONS [BY 2] TO [S3 | AZURE | GCS] "backup_path" [CONFIG configuration_json] [CREDENTIALS credentials_json]

  For more information about the syntax options, refer to BACKUP DATABASE.

- Use the INSERT…SELECT command.

  In this method, you must first create a new database with the desired number of partitions and then use INSERT…SELECT to copy the tables from the existing database to the new database. For huge tables that take more than a few minutes to copy (this depends on the amount of data and your system's scale), move the rows of the table over in large batches instead of all at once.

For example, suppose you have a monitoring workspace of size S-8, for which the recommended partition count is 64.

In this case, create a new database with the required ideal partition count and use the INSERT…SELECT command to copy the data from the existing database to the new database.
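The batched copy described above can be sketched as a small statement generator. This is an illustration under assumptions, not a prescribed procedure: the database, table, and column names are hypothetical, the table is assumed to have an integer key column with a known range, and the destination table is assumed to already exist in the new database.

```python
def create_database_statement(name, partitions):
    """Return a CREATE DATABASE statement with an explicit partition count."""
    return f"CREATE DATABASE {name} PARTITIONS {partitions};"


def batched_copy_statements(src_db, dst_db, table, key_column,
                            min_key, max_key, batch_size):
    """Yield INSERT...SELECT statements that copy `table` in key ranges.

    Assumes `key_column` is an integer column whose values lie in
    [min_key, max_key]; each statement copies one contiguous range.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    start = min_key
    while start <= max_key:
        end = min(start + batch_size - 1, max_key)
        yield (
            f"INSERT INTO {dst_db}.{table} "
            f"SELECT * FROM {src_db}.{table} "
            f"WHERE {key_column} BETWEEN {start} AND {end};"
        )
        start = end + 1
```

Running each generated statement separately keeps individual transactions bounded; the batch size can be tuned to the amount of data and the scale of the system.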

Scaling Impact on Performance

Resizing operations trigger the online addition or removal of compute resources, as well as a redistribution of data to ensure even performance across the compute workspace.

For large deployments with heavy active workloads the time required to complete the resizing operation may increase as a larger volume of active data needs to be redistributed within the deployment.

Billing

Compute consumes compute credits while running. The number of credits consumed depends on the scaleFactor of the workspace.

Resizing and scaling do not affect the storage costs, as storage is charged based on the average number of monthly GB stored, which does not change when deployments are scaled up or down.

Last modified: June 17, 2025